If AI used in hiring “understands” people with accents or speech impairments less well than other applicants, this would constitute discrimination based on disability and national origin, which is illegal in the U.S.

Artificial Intelligence (AI) is now being used in every aspect of the “employee life cycle”—from hiring to firing. For hiring, many companies utilize one-way AI-based interview tools, which send questions to job seekers’ devices and have them record their answers without a human on the other side.

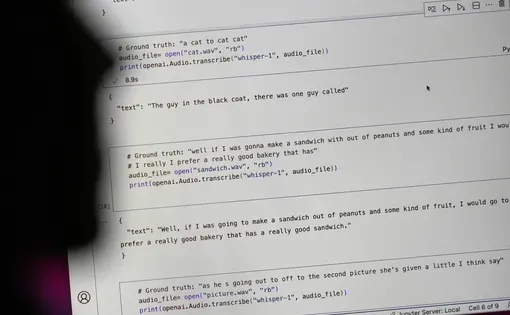

The software then transcribes what job applicants say in the video interviews to text. The AI compares that text to the job interviews of current employees who are deemed successful. If applicants use words similar to those current employees used in their job interviews, they get a favorable score. If their answers overlap less with those of current employees, the AI rejects them.
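To make the scoring step concrete, here is a minimal sketch of a word-overlap score. It is an illustration only: the function name `overlap_score` and the choice of a simple shared-vocabulary ratio are assumptions, and the scoring methods that commercial interview tools actually use are not described here.

```python
def overlap_score(applicant_transcript: str, employee_transcripts: list[str]) -> float:
    """Share of the applicant's distinct words that also appear in
    transcripts of current employees (hypothetical scoring rule)."""
    applicant_words = set(applicant_transcript.lower().split())

    # Pool the vocabulary of all reference (employee) transcripts.
    employee_words: set[str] = set()
    for transcript in employee_transcripts:
        employee_words.update(transcript.lower().split())

    if not applicant_words:
        return 0.0
    return len(applicant_words & employee_words) / len(applicant_words)


employees = ["i led a large team"]
# The same spoken answer, transcribed correctly and incorrectly.
clean = overlap_score("led a team", employees)
garbled = overlap_score("let a teem", employees)
```

In this toy case the correctly transcribed answer scores higher than the mis-transcribed one, even though the applicant said the same thing. That is how a transcription error could carry through to a lower score.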

What has rarely been discussed and never studied is whether the underlying technology, the speech-to-text transcription, actually treats everyone fairly and equally. If the transcription process produces a significantly higher “Word Error Rate” (WER) for speakers with accents or speech impairments than for native speakers without speech impairments, applicants may be unfairly rejected.
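WER is conventionally computed as the number of word substitutions, deletions, and insertions needed to turn the system's transcript into a human-made reference transcript, divided by the number of words in the reference. The sketch below shows that standard calculation. The function name and the sample sentences are hypothetical, and the simple lowercasing and whitespace splitting are simplifying assumptions, not the study's actual protocol.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance between a reference transcript and a
    machine transcript, divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edits needed to turn the first j hypothesis words
    # into the reference words processed so far.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one substituted word and one dropped word
# out of six reference words.
wer = word_error_rate("i managed a team of five", "i manage a team five")
```

Comparing the average WER across groups of speakers (for example, with and without accents) reading the same material would then show whether the transcription treats them equally.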

This research is vitally important because the results could reveal significant flaws in the speech-to-text transcription systems that underpin the AI used in hiring. If the study shows that these tools discriminate against people with accents and speech impairments, this would be illegal in the U.S.

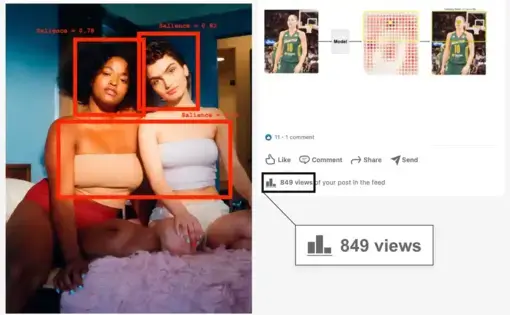

AI Algorithms Objectify Women's Bodies

This project also investigates gender bias in algorithms used by some of the largest platforms, including Google and Microsoft. Our research shows that these algorithms tag photos of women in everyday situations as racy or sexually suggestive at higher rates than images showing men in similar situations. As a result, the social media companies that leverage these algorithms have suppressed the reach of countless images featuring women’s bodies and hurt female-led businesses, further amplifying societal disparities.