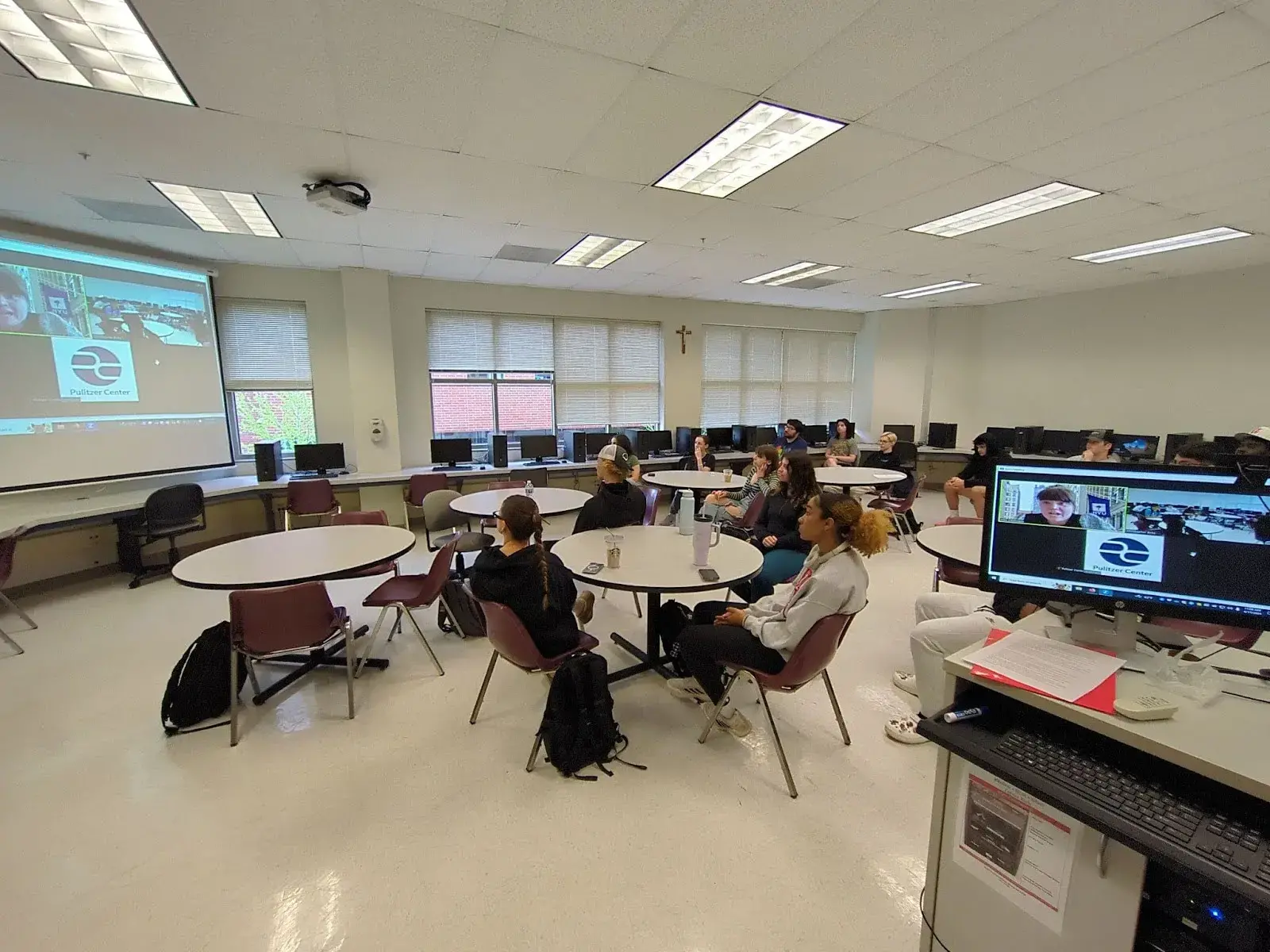

Pulitzer Center grantee and 2022 AI Accountability Fellow Hilke Schellmann visited Benedictine University virtually on April 17, 2024. Schellmann, author of The Algorithm, completed a Pulitzer Center AI Accountability project on the proliferation and effects of automated hiring tools.

“A lot of journalists when they cover artificial intelligence feel outmatched,” she said. “They rely on the companies.”

Third-party testing, experts, and strong sourcing, she said, remain effective accountability measures. Describing “what a technology does [and doesn’t] do” is a good check on what companies say it does.

Some of “these people [at companies using and/or making AI tools] were almost waiting for a reporter to call them,” she said.

Schellmann described startup environments where investors expect rapid returns and leadership turns to AI tools to cut costs quickly. Some employers have been overwhelmed by increased application volumes. “It’s not that I think these companies are really evil,” she said. “They don’t understand the implications of their technology.”

How “is the technology ever going to get better if we don’t know what the problems are?” she asked.

One such technology is affective computing, which is advertised by companies like HireVue. The technology purports to score tone and facial expressions during an interview. These scores, according to the company, can be tuned to open roles, many of which are customer-facing. Yet English-language systems gave passing scores to Schellmann and a student researcher when they read Wikipedia pages in German and Chinese.

“There’s no science,” she said, behind companies’ claims that there are “six basic human emotions” that may be mapped to facial expressions. And while HireVue and its customers told Schellmann that scores do not automatically disqualify candidates, she shows in The Algorithm that some employers use them to do just that.

Schellmann also discussed the value of curiosity, how to adapt to digital job hunting, and the future of work with students. Finding new stories “comes from talking to people.” A Lyft driver introduced Schellmann to the phenomenon of automated interviews and gamified competency tests.

“You never know where a spark or an interesting conversation can start,” she said. Connecting with sources, she said, is often an exercise in “triangulation”: finding mutual connections, using social media, and persistence.

Her job as a journalist is to “ris[e] above [...] sources” and tell the most complete and compelling story she can. Fact-checking and verification take on even greater importance when writing about AI. Schellmann quipped, “Your mom loves you; have you fact-checked that?”

“Test the tools yourself,” she told students. Resume parsers and automated interview software often offer free trials. She noted that some states, such as California, entitle residents to greater transparency about where their personal data is going and how companies and brokers use it.

“It matters how we get the job,” she said. “This is not how we should do hiring. The law is pretty clear in the United States: You’re supposed to look at the ability and skills you need for a job.”